INFINITI Single Node

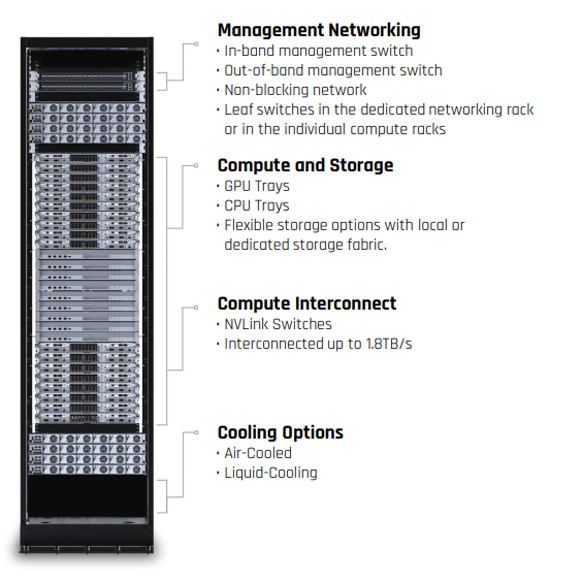

- Centralized deployment of compute, storage, and network

- High cost-effectiveness for single-machine inference scenarios

- Ultra-quiet deployment options to save energy and reduce noise

INFINITI is engineered to deploy across environments — from a single quiet node to a full intelligent computing cluster — with consistent management, storage, and networking semantics.


Infinity All-In-One System is designed to deploy across environments — from a single node to a clustered footprint — while keeping operations consistent.

A next-generation scalable unit that covers everything from proof of concept to full-scale deployment, including cooling, networking, management software, and onsite installation.

Designed for multi-trillion-parameter AI models, the platform scales from all-in-one footprints to cluster topologies. It is optimized for large-scale AI training, LLMs, and generative AI, with re-engineered interconnect, memory, storage, and cooling for significant performance gains.